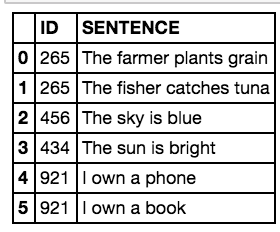

4.2.4 Application to the DataFrame

`df.head()`

Here I set a limit for the column width so that the output stays clear: `pd.set_option('display.max_colwidth', 30)`. This setting should be reset at the end; otherwise it remains in effect. The text is then tokenized with `df = df.apply(word_tokenize)`, and the number of tokens is stored with `df = df.str.len()`.

It is always worthwhile (I have made a habit of doing this) to display the number of remaining words or tokens and also to store it in the data record. The advantage is that, especially in later process steps, it is very quick and easy to see what influence an operation has had on the quality of my information. Alternatively, you can compare individual cases before and after a step if you don't know which type of algorithm (for example, for normalisation) fits my data better. Of course, this can only be done on a random basis, but it makes it easy to see whether the applied function had unintended negative effects.

The two averages are then printed: `print('Average of words counted: ' + str(df.mean()))` and `print('Average of tokens counted: ' + str(df.mean()))`.

Ok, interesting: the average number of words has increased slightly.
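The column names in the snippets above did not survive, so here is a minimal, self-contained sketch of the "store the counts in the data record" habit. It assumes a text column called `Text` and count columns `Word_Count` and `Token_Count`; these names and the sample sentences are hypothetical. To avoid downloading NLTK's tokenizer models, it swaps in a simple regular-expression tokenizer (`simple_tokenize`, also hypothetical) as a stand-in for `nltk.word_tokenize`; like `word_tokenize`, it splits punctuation off into separate tokens.

```python
import re

import pandas as pd

# Hypothetical sample data; the post's real DataFrame and column names are not shown.
df = pd.DataFrame({
    "Text": [
        "Hello world, this is a test.",
        "Tokenizers split punctuation off!",
        "No punctuation here",
    ]
})


def simple_tokenize(text):
    """Hypothetical stand-in for nltk.word_tokenize: words and punctuation become separate tokens."""
    return re.findall(r"\w+|[^\w\s]", text)


# Word count: naive whitespace split, so punctuation stays attached to its word.
df["Word_Count"] = df["Text"].str.split().str.len()

# Tokenize, then store both the tokens and their count in the data record.
df["Tokens"] = df["Text"].apply(simple_tokenize)
df["Token_Count"] = df["Tokens"].str.len()

# Compare the averages before and after tokenization.
print("Average of words counted: " + str(df["Word_Count"].mean()))
print("Average of tokens counted: " + str(df["Token_Count"].mean()))
```

With these sample rows the token average is higher than the word average because each punctuation mark counts as its own token; that is one plausible reason for a slight increase like the one noted above. Keeping the counts as columns means every later cleaning step can be checked with the same two `mean()` calls.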
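Because `display.max_colwidth` stays changed for the rest of the session, it is worth undoing it explicitly. The sketch below shows two standard pandas ways to do that, using a hypothetical one-row DataFrame: `pd.reset_option` restores the library default after a global `pd.set_option`, while `pd.option_context` limits the change to a `with` block and restores the previous value automatically.

```python
import pandas as pd

# Hypothetical long text so that truncation is visible.
df = pd.DataFrame({"Text": ["A fairly long sentence that will not fit into thirty characters."]})

default_width = pd.get_option("display.max_colwidth")

# Option 1: set the width globally, then reset it explicitly at the end.
pd.set_option("display.max_colwidth", 30)
print(df)  # the cell is truncated and ends with "..."
pd.reset_option("display.max_colwidth")  # back to pandas' built-in default
print(pd.get_option("display.max_colwidth"))

# Option 2: change the width only inside the with-block.
with pd.option_context("display.max_colwidth", 30):
    print(df)
# Outside the block the previous value applies again.
print(pd.get_option("display.max_colwidth"))
```

The context-manager form is often the safer choice in notebooks, since a forgotten reset cannot leak into later cells.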
